Just started playing with one of BinaryBots' latest Totem robots - Crab https://www.binarybots.co.uk/crab.aspx, which, as the name suggests, is a crab-like robot kit with a claw that is controllable via a micro:bit.

It is early days playing with it at the moment, but here are some initial thoughts. You get a robot that looks solid (and is solid) once it is built from Totem, a 'Meccano-esque' construction material. A brief note on the Totem system: it is nice to build with. The design around the square nuts means they slot into the struts and stay there - a nice feature - and all the tools needed to build the structure come with the kit. The only thing missing from the kit is the micro:bit, but if you are buying the kit you probably already have one (or more) micro:bits, or you can get one at the same time as buying the kit.

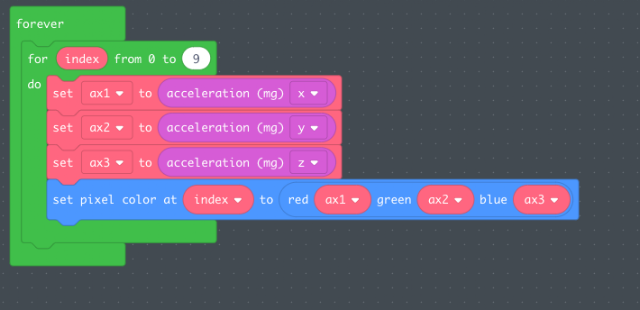

Two boards come with the kit. The first, the power board, has the battery holder and connections for motors. The second, the BinaryBot sensor board, has a number of features I have yet to explore, including two capacitive touch sensors, 4 addressable LEDs, a light sensor, a vibramotor for producing vibrations and a buzzer.

Playing so far!

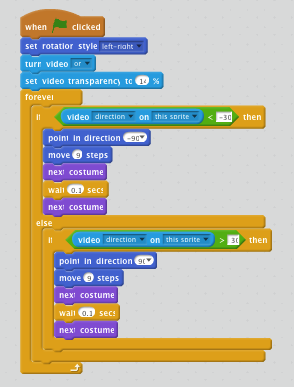

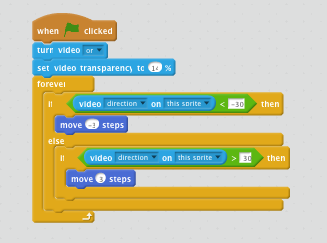

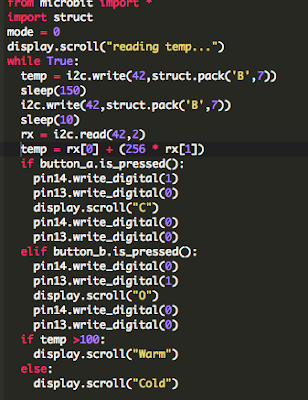

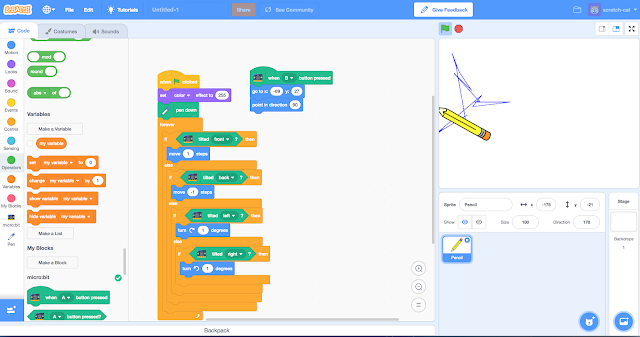

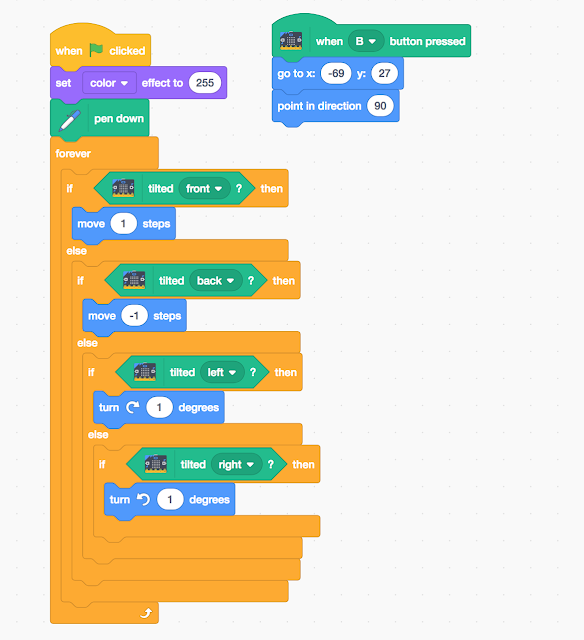

After building the Crab, I have mainly been playing with the JavaScript Blocks editor to control the opening and closing of the claw. The simple routine below controls the claw: open it (and display an o on the micro:bit) or close it (and display a c on the micro:bit), depending on whether button B or A is pressed.
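For anyone who prefers text to blocks, the same idea can be roughed out in MicroPython. This is an untested sketch, not the routine from the blocks editor: the servo pin (`pin0`), the two angles, the pulse timing and the choice of B to open and A to close are all assumptions, since the kit's pin numbers still need to be looked up.

```python
# Sketch only: the servo pin, angles and button mapping are assumptions,
# not values taken from the BinaryBots documentation.
OPEN_ANGLE = 0      # hypothetical servo angle for an open claw
CLOSED_ANGLE = 90   # hypothetical servo angle for a closed claw


def claw_action(a_pressed, b_pressed):
    """Return (angle, letter) for a button press, or None if nothing to do.

    Assumed mapping: button B opens the claw, button A closes it.
    If both are pressed, B wins.
    """
    if b_pressed:
        return (OPEN_ANGLE, "o")
    if a_pressed:
        return (CLOSED_ANGLE, "c")
    return None


def angle_to_duty(angle):
    """Convert a servo angle (0-180) to a write_analog value (0-1023).

    Assumes a 20 ms period with a 1 ms pulse at 0 degrees and 2 ms at 180.
    """
    pulse_ms = 1.0 + angle / 180.0
    return int(round(pulse_ms / 20.0 * 1023))


try:
    # Only available when running on the micro:bit itself.
    from microbit import button_a, button_b, display, pin0, sleep
except ImportError:
    pass  # on a desktop, only the helper functions above are usable
else:
    pin0.set_analog_period(20)  # pin0 is a guess at the servo pin
    while True:
        action = claw_action(button_a.is_pressed(), button_b.is_pressed())
        if action is not None:
            angle, letter = action
            pin0.write_analog(angle_to_duty(angle))
            display.show(letter)
        sleep(50)
```

Keeping the button-to-action decision in its own small function means it can be checked on a desktop before anything is flashed to the micro:bit; only the loop at the bottom touches the hardware.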

It is fun, and it works. Looking at the two boards, though, finding the pin numbers, etc. to add motors and access the sensors is where the real fun is going to begin.

Some initial ideas for where next:

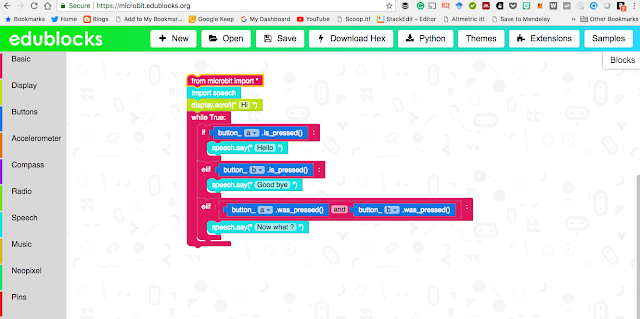

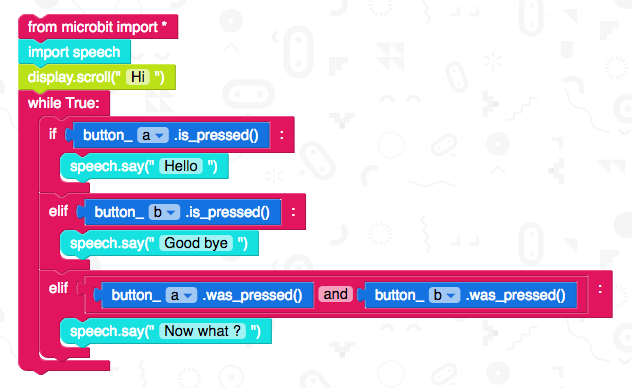

- Play with Python to program it.

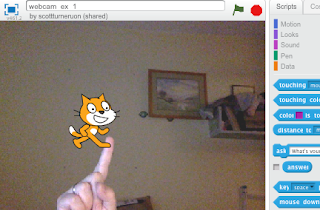

- The vibramotor included may not be powerful enough for the next idea - making it move by vibration. The sturdy structure means stronger vibrations may be needed to make it move. The nice thing about the kit is that the construction is sturdy, so it should be able to take the stronger vibrations from adding more, larger vibrating motors (to see the kind of thing I mean see: https://medium.com/@scottturneruon/crumble-junk-eggbot-db0a1d02595f ). There is room on the power board for connecting motors.

- Getting the claw to react to light.
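
For the light idea, one possible starting point is to decide open or closed from a light reading using two thresholds, so the claw does not flap when the light hovers around a single cut-off. The sketch below is plain Python with simulated readings. The threshold values and the 0-255 scale are assumptions; on real hardware the readings could come from the sensor board's light sensor once its pin is known, or from the micro:bit's own `display.read_light_level()` as a stand-in.

```python
# Hypothetical thresholds on an assumed 0-255 light scale; tune on real hardware.
DARK_BELOW = 60
BRIGHT_ABOVE = 120


def next_claw_state(light_level, claw_open):
    """Return True if the claw should be open after this reading.

    Closes when it gets dark, opens when it gets bright, and otherwise
    keeps the current state so the claw does not chatter near a threshold.
    """
    if light_level < DARK_BELOW:
        return False
    if light_level > BRIGHT_ABOVE:
        return True
    return claw_open


# Simulated readings: bright, dimming, dark, recovering, bright again.
readings = [200, 100, 40, 100, 150]
state = True  # start with the claw open
history = []
for level in readings:
    state = next_claw_state(level, state)
    history.append(state)
print(history)  # [True, True, False, False, True]
```

The in-between readings of 100 leave the claw as it was, which is the whole point of using two thresholds rather than one.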

I am looking forward to playing with it a bit more!

All opinions in this blog are the Author's and should not in any way be seen as reflecting the views of any organisation the Author has any association with. Twitter @scottturneruon